In a previous post, I argued that role playing conversations is an ineffective way of scoping the requirements for a conversational AI use case, or for your first draft design. I realised that, while role play isn’t ideal, there are a shed load more decisions and activities that need to occur before you even think about designing a use case.

So, in this series, I’ll show you the things you should do to progress through the whole AI automation process.

This is based on a combination of our experience and our recommended methodologies at VUX World, as well as countless conversations with practitioners and enterprises across the globe over the last 6 years.

Before you start: identify the right project/programme approach

Jim Rowe-Bot recently posted about how the vast majority of failed AI deployments fail because of a project management problem. While there are plenty of opinions online to back this up, the research suggests that the real problems hiding behind ‘project management’ issues, as they relate to IT and transformation projects, lie in:

- proper practice of stakeholder management

- feasibility assessments

- the extraction and analysis of requirements

As if by magic, the methodology we use at VUX, and that I’ll begin sharing with you today, will help you accomplish the above and more, and make sure your AI automation programme is run effectively and gets you the results you need.

The background on why this methodology works

The methodology we use is unashamedly adapted from… The UK Government. What a strange place to look for AI methodologies, I hear you think. It is, and it isn’t.

In 2010, the UK government had over 40 different websites, all performing different actions, poorly. Martha Lane Fox, then UK Digital Champion, was asked to conduct a review of the primary government website, Directgov.

In her report, Fox recommended a revolution of government digital services. A short time later, the Government Digital Service (GDS) was formed, with some of the best designers and Python developers in the country. They set about redesigning all of the digital services that the UK government provides, and solidified a version of the agile methodology that became part of its Service Standard for government digital service development.

This methodology is a traditional agile methodology, tweaked slightly, and has proven its ability to deliver at scale within arguably one of the most complicated collections of organisations on the planet.

At the time it was implemented, not many in government knew much about the internet. Stakeholders and government workers didn’t have a clue about websites, content, online services, user centric design or any of that stuff. It was all alien.

Think about that in the context of AI. Your organisation is highly likely to be in the same situation with AI as the UK government was with the internet in 2010.

This is why this methodology is perfect for AI projects.

The methodology

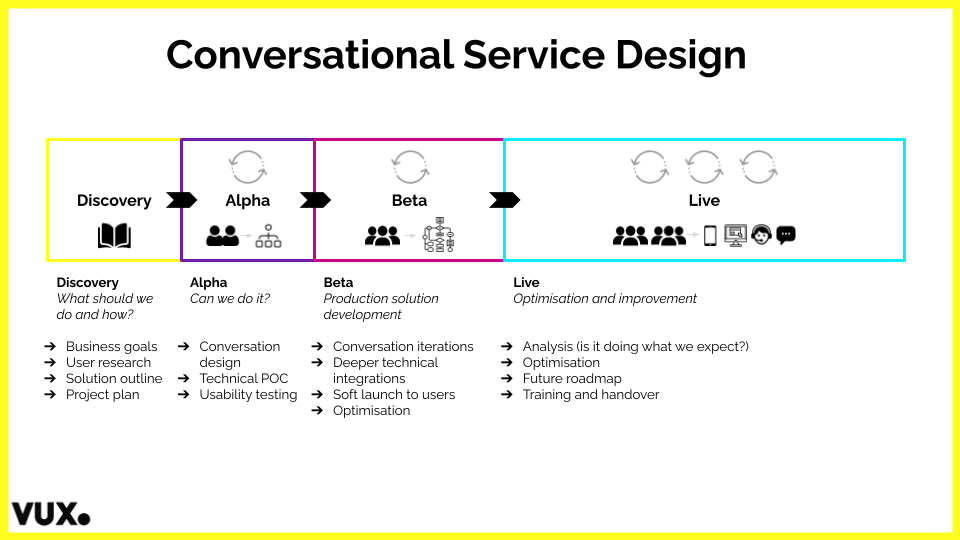

VUX World Conversational Service Design Process

First and foremost, this methodology is about managing risk and taking one step at a time to a) prove value and b) make sure that the solution meets user needs. You might not go through this process religiously, and for some projects, the phases will blend or condense, but the general framework is one that’s proven to deliver results in conversational AI and beyond.

Here’s a high-level overview of how it works:

Discovery

This phase is for getting your ducks in a row, planning your project or programme, understanding your requirements, your user needs, the feasibility of implementing your solution and making sure you can deliver on business value.

This Discovery phase doesn’t mean that you’re committing to delivering an AI project. It means that you’re assessing the business problem, user needs and validity of such a project.

During discovery, you should define and clear up the business problem to be solved, and the user journey it relates to. You’ll also need to understand the constraints you’re operating within. Do you have the technology integrations you need? Do you have the policies in place that’ll enable you to design the service you need to? Are there limitations with third party suppliers? Do you have the right support from the right people? Finally, you’ll identify the improvements AI could make, outline the solution you intend to build and determine its feasibility.

Typical Discovery activities are:

- defining your user needs

- deciding what use cases you should automate

- planning and estimating your roadmap

- assessing the feasibility of your plan and initial use case

- deciding your approach and whether to buy, build or partner

- putting together a business case

- scoping the requirements of the conversation/s to be had

- validating those conversations

- defining and documenting your requirements

- determining the feasibility of executing them

At the end of the Discovery phase, you’ll decide whether it’s worth progressing to Alpha based on your business case and whether you believe you can create the improvements you aim to.

Alpha

You’ve probably heard the term ‘Alpha’ before. And if you haven’t, you’ve certainly heard of Proof of Concept (POC). The Alpha phase is where you design and test the riskiest components of your solution and validate that it’s possible to design and build.

This may involve tackling the ‘must have’ requirements of your AI assistant. It means becoming confident in your ‘happy path’, i.e. the primary journey that’ll resolve the needs of most users. If you’re integrating into business systems, this is the time to make sure the APIs do what you need by building a version of your assistant using these APIs, or even building these APIs if you don’t have them. If your solution relies on the cooperation of third party suppliers, now is the time to engage them to make sure they can do what you need.

In Alpha, you want to prove that you can a) deliver the broad scope outlined in Discovery, b) do so in your production systems and c) do so whilst maintaining the integrity of the user experience.

Typical Alpha activities are:

- iterating and testing your design

- identifying the scope of what’s to be built

- selecting the right technology

- testing or building the required APIs for system integrations

- iterating and implementing a version of the use case in your chosen software to validate feasibility

- ensuring the integrity of the user experience

At the end of Alpha, you should be confident that you can build and implement the whole solution within the timelines and pricing you’ve planned. You should have evidence that implementing the solution will realise your business case, that it’s feasible to do, and you should know who and what you need to deliver it.

Beta

Once you’ve validated that your solution is feasible, you move into Beta, where you’re building the real production version. You’re adding the bells and whistles to your design and building your technical solution, ready to go live. You’re also planning for how to integrate the solution into your current live service. This includes planning for training of staff who’ll manage the solution going forwards.

It’s usually a good idea to put your Beta live in front of customers in a soft launch, so you can iteratively improve it ahead of a full Live launch. This will enable you to gather real feedback on how to improve its performance.

Also in Beta, you should be planning for how to scale your solution into other use cases and channels without having to start again from scratch in future.

Typical Beta activities include:

- designing the full scope of your use case

- building the full technical solution

- testing and quality assuring your solution

- understanding how to measure its performance

- iteratively improving your assistant during soft launch

At the end of the Beta phase, you should have everything in place to go live. It’s often not feasible to build the entire solution in Beta, as you’ll always be prioritising the next Must Have requirements. Therefore, by the time you go live, you should have a backlog of improvements and new features or other constraints that you’ll aim to tackle when Live.
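If you track requirements in a spreadsheet or a script, the backlog you carry into Live can be as simple as "everything not yet done, highest priority first". Here’s a minimal Python sketch of that idea. The `BacklogItem` type, the `live_backlog` function, the priority labels beyond Must Have and the sample items are all hypothetical, made up for illustration, and aren’t part of the methodology itself.

```python
from dataclasses import dataclass
from enum import IntEnum


class Priority(IntEnum):
    # Assumed labels: only "Must Have" is named in this post,
    # the other two are illustrative.
    MUST = 1
    SHOULD = 2
    COULD = 3


@dataclass
class BacklogItem:
    title: str
    priority: Priority
    done: bool = False


def live_backlog(items):
    """Return unfinished items, highest priority first.

    sorted() is stable, so items with the same priority keep
    the order they were listed in.
    """
    return sorted((i for i in items if not i.done), key=lambda i: i.priority)


# Hypothetical requirements at the end of Beta.
items = [
    BacklogItem("Answer order status queries", Priority.MUST, done=True),
    BacklogItem("Add a voice channel", Priority.COULD),
    BacklogItem("Hand off to a live agent", Priority.SHOULD),
]

for item in live_backlog(items):
    print(f"{item.priority.name}: {item.title}")
```

Most teams will hold this in whatever backlog tool they already use. The point is only that the Live backlog falls out of the prioritisation you’ve already been doing in Beta.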

Live

I’ve said plenty of times that going Live is the beginning of your AI project. This phase involves everything you need to complete to go live in production full-time, and making sure that you have the skills and tools needed. That means making sure you have the team and resources required to manage and sustain the solution in the long term.

Live activities include:

- launching and deploying your use case on your channel/s of choice

- managing and improving your assistant on an ongoing basis

- reporting and proving the value of your assistant to stakeholders

- increasing user adoption

- shaping and implementing future roadmap items

Going deep

Over the next few weeks and months, I’ll be diving deep into each of these phases and outlining the process and activities we follow at VUX, and that you can follow to take your AI strategy from an idea of “We need our own version of ChatGPT” to the reality of having an AI programme that’s delivering value.

Whether you’re a seasoned AI or technology veteran, or whether you’re just starting to explore AI today, this series will make sure that you’re taking the right steps, in the right order, with the right people to deliver the right value.