Here’s a run-through of all the new updates to Siri announced at WWDC 2020.

Unlike last year at WWDC19, there weren’t any big expectations going in, though we’re always secretly hoping to see full third-party access to Siri, with the ability to build fully conversational applications for Apple’s digital assistant.

Siri’s learning

A few standout stats shared during the keynote by Yael Garten, Director, Siri Data Science and Engineering, show that Siri is well used by consumers and that it’s getting smarter.

According to Yael, Siri processes over 25bn requests each month, and has 20x more facts than 3 years ago.

It’s nice to see some numbers from Apple, though it would be great to understand monthly active users, too.

Google announced in January that Assistant has 500m monthly active users. Using that as a benchmark, if Apple had the same number of monthly active users, 25bn requests would equate to 50 uses of Siri per user, per month, on average.

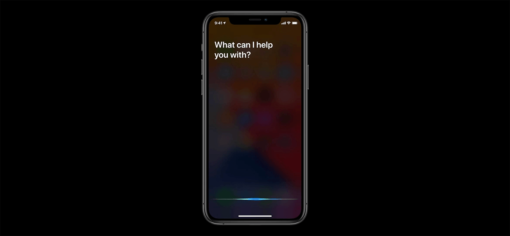

Siri’s face lift

The first enhancement to Siri is the visual interface.

Usually, when you summon Siri, your entire screen is taken over by the digital assistant.

This can be distracting, take you off task and slow down your productivity.

Now, in iOS 14, Siri has a more subtle presence on screen.

Instead of taking up your entire screen, the animated Siri logo makes an appearance at the foot of the screen when you invoke it.

This means that you’re able to use Siri without switching tasks.

This is similar to how you might use your smart speaker: as an additional device to get quick answers or get things done without having to stop what you’re doing entirely.

You might think that this isn’t a big deal. And it’s not a BIG deal, but if you use Siri multiple times per day, every little helps. It’s these small things that add up to a great experience over the course of 20 interactions.

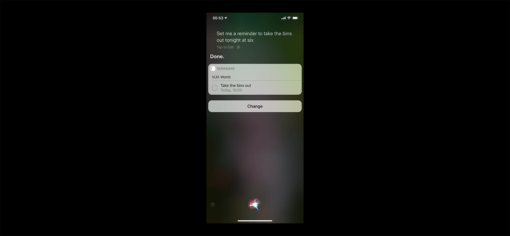

Subtle confirmations

When Siri takes an action on your behalf, to confirm that it’s done what you asked it to, you’d usually hear an audible confirmation, as well as see a visual confirmation that covers up your entire screen.

With iOS 14, these confirmations are taking the same subtle backseat as the Siri animated logo.

Instead of the confirmation taking over the entire screen, you’ll see a small confirmation widget overlay at the top of your screen.

This means you can see whether the assistant has completed your task without needing to pause what you’re doing.

It’s another nod towards Siri moving out of the way: an enabler, rather than the star of the show.

Enhanced knowledge base

One of the frustrating things about Siri over the years has been its inability to answer simple queries natively.

In almost all studies that compare the leading voice assistants’ abilities, Siri finishes last.

Given that asking general questions is one of the most popular voice assistant use cases, this hasn’t boded well for Siri.

Usually, when Siri can’t answer your question, it just gives you a list of search results and leaves you to fend for yourself.

Yael Garten mentioned that Siri has added to its knowledge base by indexing answers from a greater array of websites, giving it 20x more facts than 3 years ago.

It’s not 100% clear which websites are being indexed, or how developers and brands can optimise their own websites to increase their chances of being served through Siri, but it’s a step in that direction.

Send audio messages with audio commands

In iOS 14, you can ask Siri to send audio messages through iMessage.

This isn’t a groundbreaking addition, but it might get people using audio messages more. Fusing the access, creation and sending of audio messages into one spoken interaction makes the whole process quicker and more frictionless.

On-device processing of speech to text

Probably one of the biggest changes, at least for speech technology enthusiasts, is that Apple will run automatic speech recognition (ASR) for its Dictation feature on-device.

This means that when you dictate a message, Siri will transcribe your audio into text without requiring a cloud connection.

Not only is that a quicker way of transcribing text, but it’s also more secure, as it doesn’t require your voice to be sent to Apple servers for processing.

Google announced the same functionality last year at I/O ’19, and it has been running on the Pixel 4 since October 2019. It’s certainly quicker.

I don’t think that people appreciate how different the voice to text experience on a Pixel is from an iPhone. So here is a little head to head example. The Pixel is so responsive it feels like it is reading my mind! pic.twitter.com/zmxTKxL3LB

— James Cham ✍🏻 (@jamescham) May 27, 2020

It’s unclear how Apple will deploy this model. Google had to release an entirely new phone with enough processing power to run its AI on-device, though Google’s on-device model does a bit more than ASR.

It’s likely that Apple will ship this as part of iOS 14 and that it’ll be confined to Dictation for now. When you ask Siri to do something, your audio will still be sent to the cloud for intent recognition, at least for the time being.

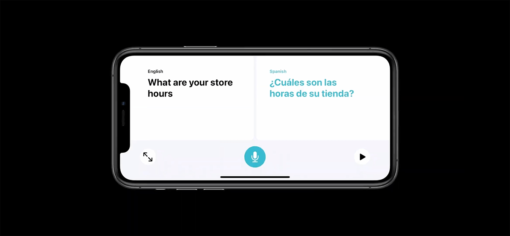

Translate

Another feature that has existed on Google Assistant since the back end of last year is Interpreter Mode.

It translates speech from one language to another in near real time, so two people who speak different languages can hold a conversation with Google Assistant doing the translating.

Well, in iOS 14, Siri will be able to do the same.

Translate also has Conversation Mode, which facilitates a conversation between two people. You speak to Siri in one language and it repeats what you said through the phone in your target language. Your partner can then reply in the target language, and Siri will repeat it back in the original language.

Apple tends to be late to some innovations, but better late than never.

Subtle, but expected

There are two themes running through the updates to Siri from WWDC 2020:

- Siri is becoming more subtle

- Siri is keeping up with what’s expected

Rather than taking centre stage and commanding your full attention, Siri is stepping into the background, getting out of the way and letting you get on with your life. That’s the true power of ambient computing, and these announcements show that Apple is thinking of Siri in the right way: ever-present, ready, but subtle.

Second, Siri is keeping up with the direction of voice assistant innovation (and expectations) set by Google: on-device processing, real-time translation and an enhanced knowledge base.

These updates to Siri aren’t innovations; they’re follower moves, but they’re required to keep pace with Google.

We didn’t see what the voice community was hoping for, namely full third-party access to Siri development tools so that anyone can create bespoke conversational experiences. Even so, these changes at least take Siri a step forward for consumers. That should help increase confidence and engagement in Siri usage, which is a net benefit for anyone creating voice assistants and voice user interfaces.

The more comfortable people get with talking to their devices, the more likely they are to talk to your company in future.