Has Amazon’s latest Lex feature, the Automated Chatbot Designer, just solved conversation design for everyone?

For anyone who’s ever designed any conversational interface, ever, you’ll know that you live and die by your training data.

Researching how your customers speak about your use case, and including that language in your NLU training data, is imperative. Without real data from real people, your chat or voice app simply won’t understand people as well as it should. Perhaps not at all.

Now, you can often get away with limited training data if you’re using an AI platform with ‘pre-built’ intents or entities. For example, most platforms are tuned to pick out things like addresses, numbers, dates, names and other common pieces of information. Google is fairly good in this department.

Some platforms also have templates that’ll structure a conversation for you as a starter for ten. Microsoft have some decent templates for things like meeting room booking assistants, for example.

But these are all general use cases that don’t rely on industry- or product-specific knowledge.

What happens when you’re creating a ‘freeze my bank card’ use case? Or putting together an insurance quote? Checking whether a prescription is ready for collection? Asking about pricing, stock availability or delivery times for a specific product? All of this requires specific domain knowledge.

Usually, to create a language model for a specific use case like this, you need to conduct a lot of research and perform a lot of testing.

You need to research what your users are likely to say to the bot and, crucially, how they say it.

If you’re lucky, you’ll have a place to start: call recordings or live chat transcripts. Failing that, you’ll shadow call centre agents, review emails, pore over the website search data and conduct customer interviews.

It takes a lot of effort for both conversation designers and AI engineers. But it’s necessary.

Introducing Amazon Lex Automated Chatbot Designer

Imagine if you could streamline this research process. Instead of manually reviewing hundreds of chat transcripts and pulling out utterances to populate your NLU training data, you could simply ingest a batch of transcripts and have your intents and training data created automatically.

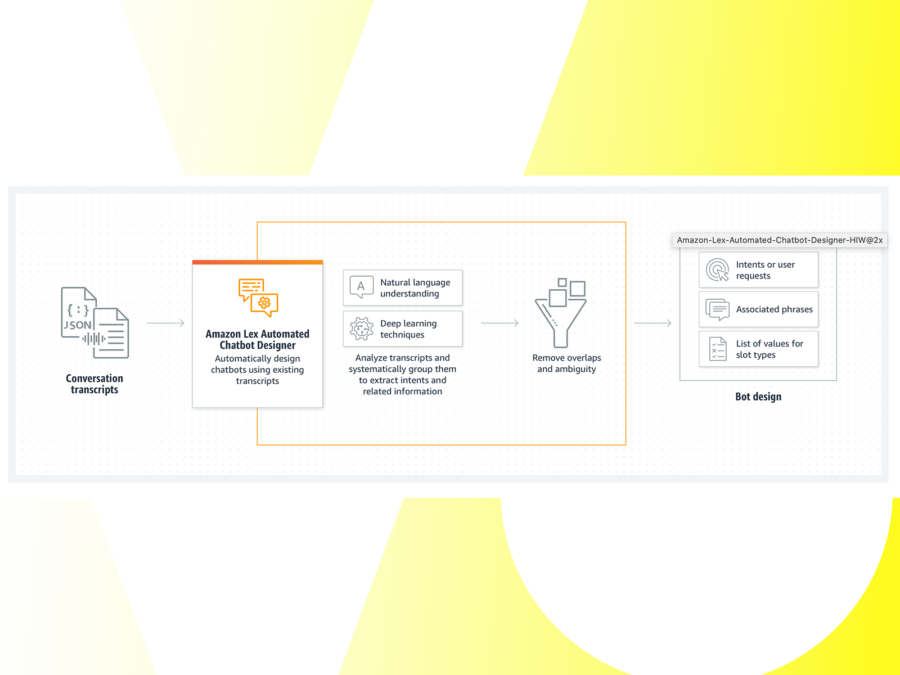

Well, that’s what Amazon has just released with Amazon Lex Automated Chatbot Designer.

Essentially, it integrates with Amazon Connect services (such as phone calls and Facebook Messenger), pulls chat and call transcripts into Lex, clusters similar customer utterances together and suggests the intents and entities your bot is likely to need.

This certainly streamlines the design process and is a huge leap forward in sourcing real customer language and using it to build conversational interfaces.
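To give a feel for what “clustering customer utterances” means, here is a toy sketch. Amazon hasn’t detailed how Lex does this, so this is not its algorithm: it assumes a simple word-overlap (Jaccard) similarity and a greedy single pass, and the function names, threshold and sample utterances are all hypothetical. Each resulting group is a candidate intent a designer would then review and name.

```python
import re


def tokens(utterance: str) -> set[str]:
    # Lower-case the utterance and split it into a set of word tokens
    return set(re.findall(r"[a-z']+", utterance.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    # Shared words divided by total distinct words across both utterances
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def cluster_utterances(utterances: list[str], threshold: float = 0.25) -> list[list[str]]:
    # Hypothetical greedy clustering: each utterance joins the first cluster
    # whose seed (first member) is similar enough, otherwise it starts a new one.
    clusters: list[list[str]] = []
    seeds: list[set[str]] = []
    for text in utterances:
        words = tokens(text)
        for i, seed in enumerate(seeds):
            if jaccard(words, seed) >= threshold:
                clusters[i].append(text)
                break
        else:
            clusters.append([text])
            seeds.append(words)
    return clusters


# Hypothetical lines pulled from call and chat transcripts
transcript_lines = [
    "I need to freeze my card",
    "can you freeze my bank card please",
    "is my prescription ready for collection",
    "has my prescription been ready yet",
    "freeze my card it's been stolen",
]

for n, group in enumerate(cluster_utterances(transcript_lines), start=1):
    print(f"Candidate intent {n}: {group}")
```

With these sample lines, the three card-freezing requests land in one group and the two prescription questions in another. Word overlap is deliberately crude: a production system would more likely compare utterances by meaning (for example, with sentence embeddings), so that “block my card” and “freeze my card” still end up together.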

It hasn’t quite cracked conversation design once and for all

Designers will still need to sense-check the output, write bot prompts, arrange everything into some kind of structure (depending on your use case), create the content or integrations the bot needs to do what the user intends, and conduct testing.

So, it hasn’t quite cracked conversation design once and for all. However, it takes a large headache out of the process, which is a great step forward.