Divergence within the conversational AI market is necessary. It creates differentiation. Here’s a look at how that’s happening, where it’ll converge in future and why that’s important.

The world moves in waves. Ups and downs, ins and outs, peaks and troughs. Divergence and convergence.

These patterns occur in every area of life. You have good moods and bad moods, births and deaths, waking and sleeping, preparing and eating.

It happens in markets. Stocks are up, then they’re down. Supply is abundant, then it’s short. It’s a buyer’s market, then a seller’s market. And around we go.

The birth of divergence in conversational AI markets

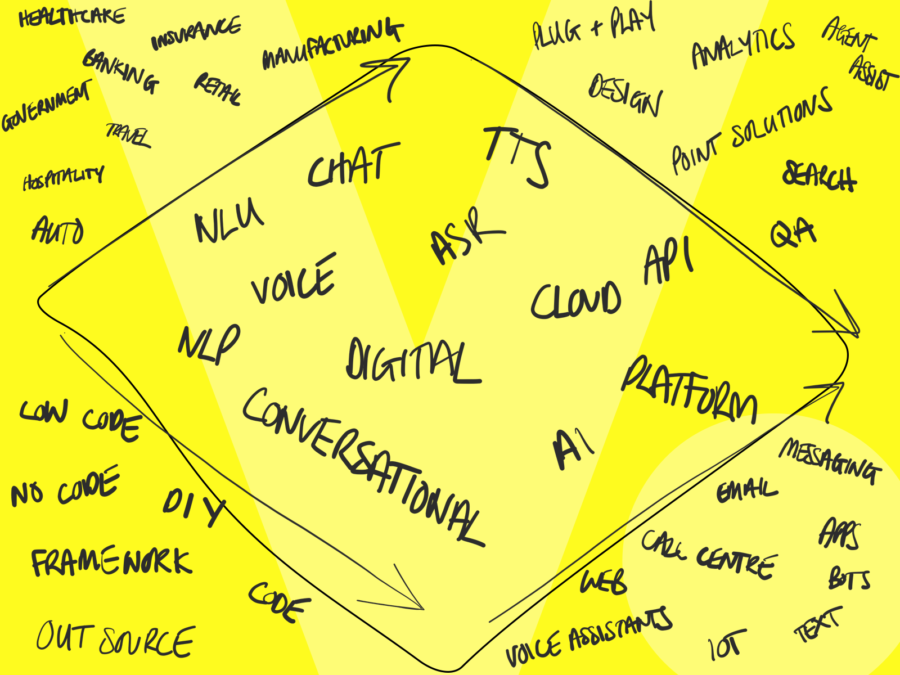

Right now, in this ‘talking computers’ sub-field of artificial intelligence, we’re in a phase of divergence. Diverging so much that there’s no sign of us even agreeing on what to call it.

‘AI’ is too broad. ‘Voice’ excludes chat and ‘chat’ excludes voice. ‘Digital’ isn’t conversational and ‘conversational’ is a mouthful. ‘NLU’ is just a part of NLP and ‘NLP’ means nothing to most people. We’re diverging.

And while we’re diverging on what to call it, technology suppliers and vendors are growing rapidly and are being forced to diverge on capabilities, market positioning, targeting and approach in order to find a launch pad for their products.


Just as we’ll eventually settle on a shared language around NLP technologies, we’ll likely see capabilities, suppliers and services converge too, but not yet.

Tracking the divergence

So let’s explore just how the market is diverging, so we can understand how it’ll likely converge and what that means for businesses wanting to utilise NLP technologies and the companies building them in future.

Capability providers

First, you have providers that are breaking down the entire conversational AI pipeline and specialising in specific components, with the aim of squeezing the most performance out of that particular piece of the jigsaw.

Companies like Synaptics that specialise in wake-word detection, far-field mic technology and signal processing, all aimed at getting the cleanest signal into your speech recognition engine.

Speech recognition providers like Deepgram that specialise in producing the most accurate speech-to-text results with the quickest response times, so that the NLU receives the most accurate input possible.

Sentiment analysis providers like OTO that specialise in understanding the emotional state of the user, and identification providers like Pindrop that specialise in voice biometrics and authentication.

NLU providers like Rasa that specialise in the highest-accuracy intent recognition and entity extraction.

Text-to-speech providers like ReadSpeaker and Resemble that specialise in producing the most human-sounding voices to respond to customers.

Then you have a bunch of behind-the-scenes design tools like Voiceflow, BotMock and OpenDialog that aim to lighten the creative load of building applications.

Engineering tools like Bespoken, which makes light work of technical testing, and QBox, which takes the pain out of improving applications once they’re live.

User research tools like Pulse Labs that specialise in usability testing and quality assurance.

Integration tools like AudioCodes VoiceAI Connect that allow AI agents to be integrated into specific environments, like call centres, with little pain.

Analytics tools like Dashbot that give you insights into what’s working and what’s not in your live applications.

All of these are individual capabilities that, when combined, give you a full end-to-end conversational AI pipeline.

Cloud providers

You then have the big cloud providers who provide almost all of the above capabilities on their own, and you can stitch them together to form an end-to-end offering. Companies like Amazon, Google, Microsoft and IBM.

Point solution providers

You then have point solution companies that utilise some of the above components to create bespoke new products for specific market needs.

Take Balto, for example. It utilises speech recognition and advanced NLU capabilities to provide agent assist technologies to help call handlers be more productive.

Or Lucy AI that utilises NLU solutions to search through enterprise documents and extract unstructured and otherwise buried organisational knowledge.

And Trinity Audio, which utilises TTS technologies to make publishers’ content more accessible and wider reaching.

Platform providers

You then have platform providers that roll up a bunch of those technologies into as near as you can get to an end-to-end toolkit for building and implementing conversational agents.

Companies like [24]7.ai, Kore.ai and over 2,000 others who deliver end-to-end solutions.

But even platform providers themselves have diverged in a number of ways:

Industry divergence

Some companies are targeting specific industries, like Nuance for healthcare, SoundHound for automotive, Kasisto and Clinc for banking, Hi Auto for quick service restaurants and Converse360 for local government.

Channel divergence

Others are targeting specific channels, like Infobip for WhatsApp, Creative Virtual for chatbots, Sovran AI for call centres, Alan AI for apps and Speechly for the web.

Others have a multi-channel approach like Speakeasy AI, Uniphore and others.

Implementation divergence

You then have some platform providers who take a hands-off, self-service approach, like Boost AI, where the platform is a tool to be used without much need for support from the platform provider.

Others have a more involved, hands-on approach, like Poly AI where, if you want, a whole team of designers and engineers will design, build and manage your whole service for you.

Technology divergence

You have some that have proprietary technologies like Speakeasy AI and Wluper.

Others utilise cloud ecosystems like Artificial Solutions and Zammo, built on Microsoft LUIS.

Then, some let you plug and play your own NLU, ASR and TTS, like Cognigy.

Skillset divergence

You then have some platforms that are intended to be low-code and accessible like MindSay.

Others require more technical skills, but are more powerful, like Teneo.

Others are purely frameworks like Jovo and some require specialist vendor support like Seekar.

Divergence is unavoidable

You see, the industry is diverging because all of these providers are trying to find a space in the market that hasn’t been taken yet. It’s the only way you can differentiate.

“We do call centres better than anyone else” is a very specific message that means something to someone looking to automate calls.

“Voice AI for drive-through” is an easier sell to that specific market than “general tool that you can use for whatever you like”.

The value of diverging conversational AI technology

There’s obvious value in these differentiated and divergent approaches. An NLU trained on thousands or millions of drive-through ordering conversations is going to perform much better than a general NLU trained on a few sample utterances pulled out of a designer’s head.

Providers that focus on specific industries are also able to offer more compelling services that solve the specific needs of that industry. Webio’s ability to handle specific debt collection conversations, make outbound debt recovery calls and even take payments is a tremendously compelling offering compared to the build-it-yourself alternative.


Cue conversational AI convergence

Even though there’s value to be found in this divergent and differentiated approach, I can’t help but feel like we’ll converge eventually.

Those who specifically focus on chat will shift into voice. We’ve already seen that with companies like Boost AI and ActiveChat.

Those who specialise in one industry, like MindSay with hospitality, will shift into other industries. We’ve seen that with Hyro. There’s simply too much opportunity elsewhere to stay vertical-specific. Vertical-specific solutions solve specific problems, but they limit growth.

Those who specialise in Alexa will move into IVR and other channels. We’ve seen that with Voiceflow, Zammo and Voicify.

Those who specialise in technical power, like Poly AI, will move into accessible, low-code options like Cognigy’s, and those who specialise in no-code, like TalkVia, will open up more power.

Those who have proprietary technology will integrate with other more generic capabilities, and those generic capabilities will begin allowing further training and customisation.

Those who have a hands-on approach will develop a hands-off approach as the market acquires more skills, and those with a hands-off approach will develop a hands-on approach as they expand into new markets, industries and geographies.

This will likely lead to further acquisitions as provider X looks for shortcuts into market Y by buying provider Z. We’ll be left with a bunch of technology that can be used for whatever you need it for, whatever your industry or maturity level or budget.

It’s all swings and roundabouts.

The upshot?

This technology and this market are a moving beast that’ll continue to move into the future, so the most important thing businesses can do today is not to concern themselves with the technology too much. Focus on your business requirements and, importantly, agility. Be prepared to switch providers, try new solutions and avoid getting stuck in your ways or with a single provider indefinitely.

For technology providers, finding a specialism to differentiate yourself will get you into the market, but be prepared for a big battle ahead as others encroach on your territory. Flexibility in your approach, along with constant capability and industry expansion, is going to be crucial.

As for what we’ll call it, my bet is on NLP.